ECS is a great way to run containers in AWS, especially when using Fargate as the infrastructure management plane. When running backend microservices in this way, it is natural to assume that they should be deployed in a private subnet, which is where the fun begins.

Tasks are the unit of execution in ECS and are essentially a host for one or more containers. Think Pods in Kubernetes. To run a task on ECS, you first create a task definition which references one or more container images. These images reside in a container registry such as Docker Hub or Elastic Container Registry. When the task begins to initialise, it must first download the image from the registry before it begins to run.

Now, if we analyse the network required here, it should be clear that we need access from a private subnet to the container registry. For this, I’ll assume the container registry is Elastic Container Registry (ECR). This leaves us with our first decision: do we keep our network traffic private, or do we use a public endpoint over the internet?

1. Private Network Traffic

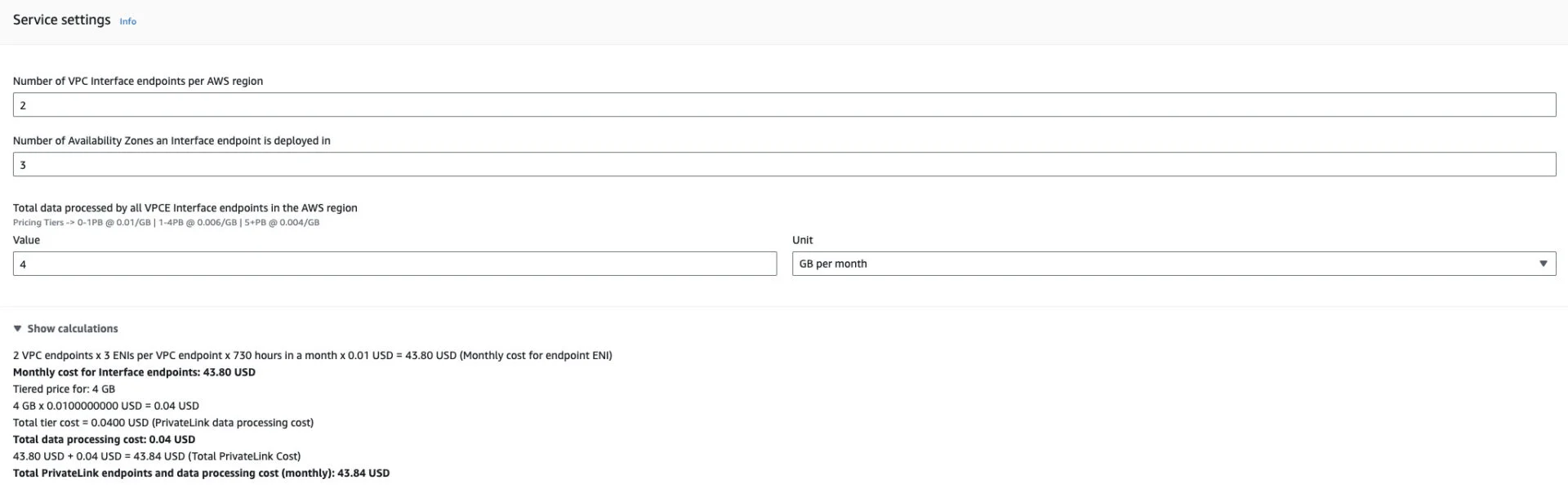

To achieve private network traffic from ECS Tasks to ECR we must use VPC (Interface) Endpoints. VPC Endpoints are implemented using AWS PrivateLink. Although outside the scope of this blog, it essentially provides access to services outside the VPC using an ENI, which can have a security group and an endpoint policy attached for additional security. Since we are using Fargate on the latest platform version (1.4), the AWS documentation says we need 3 VPC Endpoints to work with ECR: two Interface endpoints for ECR and one Gateway endpoint for S3, where the image layers are stored. Great, 3 endpoints and we have private network traffic from ECR to our ECS tasks.

Let’s have a quick tot up of the costs we’d incur here. First the assumptions: as usual, we’re assuming 3 AZs in the us-east-1 region. I’ve assumed 4 deployments of 250MB a week, resulting in 4GB a month of deployments. Here, I’ve created an AWS Cost Calculator estimate to represent these 3 VPC Endpoints. A keen eye might notice there are only 6 VPC Endpoints costed: that is the two Interface endpoints deployed in each of the 3 AZs. The VPC Gateway endpoint used by S3 is missing because it has no charge.

There is no additional charge for using gateway endpoints.
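As a rough illustration of the three endpoints described above, the sketch below builds the request parameters for each one. The helper name `ecr_endpoint_requests` and all resource IDs are hypothetical, and enabling private DNS on the Interface endpoints is an assumption rather than something stated in this post. The dictionary keys follow the parameter names of boto3's `create_vpc_endpoint` call.

```python
REGION = "us-east-1"  # region assumed throughout this post


def ecr_endpoint_requests(vpc_id, subnet_ids, security_group_ids,
                          route_table_ids, region=REGION):
    """Build one parameter dict per VPC endpoint needed to pull from ECR."""
    # Two Interface endpoints: the ECR API and the Docker registry API.
    interface_requests = [
        {
            "VpcEndpointType": "Interface",
            "VpcId": vpc_id,
            "ServiceName": f"com.amazonaws.{region}.{service}",
            "SubnetIds": list(subnet_ids),          # one subnet per AZ
            "SecurityGroupIds": list(security_group_ids),
            "PrivateDnsEnabled": True,              # assumption, see lead-in
        }
        for service in ("ecr.api", "ecr.dkr")
    ]
    # One Gateway endpoint for S3, attached through route tables
    # instead of subnets and security groups.
    gateway_request = {
        "VpcEndpointType": "Gateway",
        "VpcId": vpc_id,
        "ServiceName": f"com.amazonaws.{region}.s3",
        "RouteTableIds": list(route_table_ids),
    }
    return interface_requests + [gateway_request]


# Hypothetical IDs, for illustration only.
requests = ecr_endpoint_requests(
    vpc_id="vpc-0example",
    subnet_ids=["subnet-a", "subnet-b", "subnet-c"],
    security_group_ids=["sg-0example"],
    route_table_ids=["rtb-0example"],
)
for request in requests:
    print(request["VpcEndpointType"], request["ServiceName"])
```

Each dictionary could then be passed to `boto3.client("ec2").create_vpc_endpoint(**request)`. The call itself is left out so the sketch runs without AWS credentials.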

Ok, for this setup we’re essentially spending $43.84 a month. Or are we…..????
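The arithmetic behind that figure can be sketched as follows. The unit prices are assumptions ($0.01 per Interface endpoint per AZ per hour, $0.01 per GB processed, and a 730-hour month), chosen because they reproduce the calculator total quoted above. Check current AWS pricing before relying on them.

```python
HOURS_PER_MONTH = 730        # assumed month length
ENDPOINT_HOURLY_USD = 0.01   # assumed price per Interface endpoint per AZ
ENDPOINT_PER_GB_USD = 0.01   # assumed data processing price per GB


def interface_endpoint_monthly_cost(endpoints, azs, gb_per_month):
    """Monthly cost of Interface endpoints; Gateway endpoints are free."""
    fixed = endpoints * azs * ENDPOINT_HOURLY_USD * HOURS_PER_MONTH
    data = gb_per_month * ENDPOINT_PER_GB_USD
    return round(fixed + data, 2)


# Two ECR Interface endpoints, 3 AZs, 4GB of image pulls a month.
print(interface_endpoint_monthly_cost(endpoints=2, azs=3, gb_per_month=4))  # 43.84
```

Almost all of the bill is the fixed hourly charge. The 4GB of data adds only 4 cents.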

2. Public Network Traffic

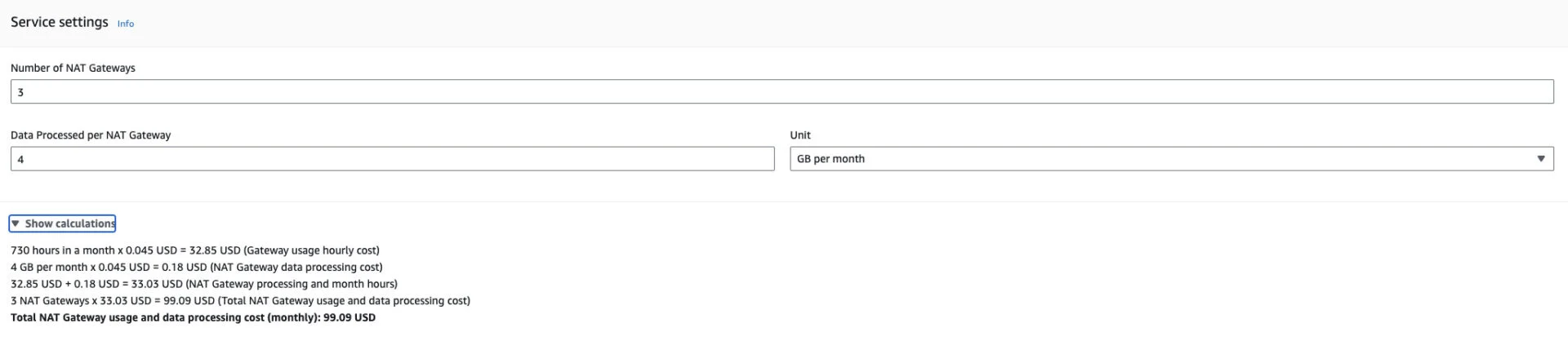

If we are not concerned with allowing our traffic outwards over the public internet, then we can use NAT to allow access from the private subnet to the internet while preventing internet traffic from reaching the subnet. Deploying an AWS NAT Gateway in each availability zone and directing internet-bound traffic to it using route tables achieves this. Let’s look at the breakdown of costs using a NAT Gateway architecture.

Making the same assumptions as the first scenario and deploying a NAT Gateway to 3 AZs in the us-east-1 region, we calculate the costs to be $99.09 a month. More than twice the price.

So $43.84 versus $99.09 a month… a no-brainer, eh? Well, think again…

When Reality Bites

You see, what we’ve calculated here is the cost to download the container image, nothing more.

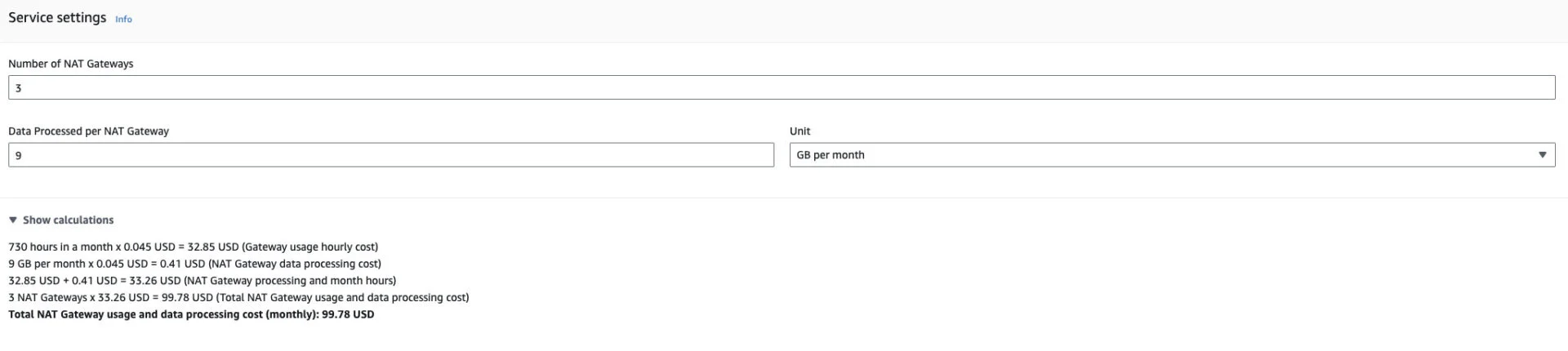

At a very minimum, you likely want to include logging (CloudWatch) and tracing (X-Ray). Let’s assume an additional 5GB a month for this traffic.

For the Public Network Traffic option, the new price is $99.78, an increase of 69 cents.

Looking at the Private Network Traffic option, we’ve increased the price to $109.59, an increase of $65.75.

Service Network

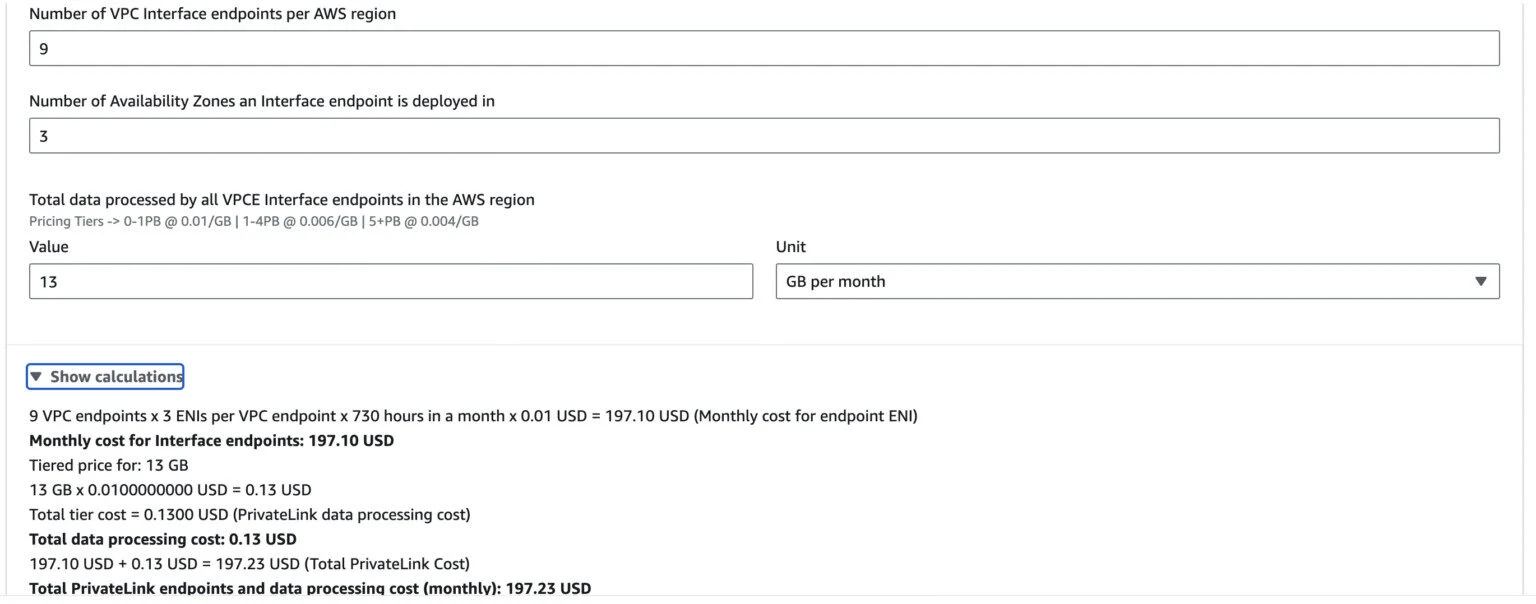

Well, any useful application is going to need additional services to interact with. Let’s throw in a database (Aurora), a queue (SQS), encryption (KMS), and parameters and secrets (SSM). Now each service that is not deployable to a VPC requires a new VPC Endpoint using PrivateLink to grant network access. A full list of services that support VPC Endpoints can be found here. Let’s recalculate assuming 1GB per month for each of these services. No prizes for guessing the results.

The price of the public network now reaches $100.29, while the private network reaches a monthly cost of $197.23.

A quick rule of thumb is that each VPC Interface Endpoint (every service that’s not S3 or DynamoDB) costs about $22 per month for 3 AZs.
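That rule of thumb also gives a rough break-even point against NAT Gateways. The sketch below compares fixed hourly charges only, using assumed us-east-1 prices ($0.01 per endpoint-AZ-hour, $0.045 per NAT Gateway-hour, 730 hours a month). It ignores data processing charges, so treat the result as an estimate, not a quote.

```python
import math

HOURS_PER_MONTH = 730        # assumed month length
AZS = 3
ENDPOINT_HOURLY_USD = 0.01   # assumed price per Interface endpoint per AZ
NAT_HOURLY_USD = 0.045       # assumed price per NAT Gateway

# Fixed monthly cost of one Interface endpoint spread across all AZs.
endpoint_fixed = AZS * ENDPOINT_HOURLY_USD * HOURS_PER_MONTH
# Fixed monthly cost of one NAT Gateway in every AZ.
nat_fixed = AZS * NAT_HOURLY_USD * HOURS_PER_MONTH


def break_even_endpoints():
    """Smallest endpoint count whose fixed cost exceeds the NAT Gateways'."""
    return math.floor(nat_fixed / endpoint_fixed) + 1


print(round(endpoint_fixed, 2))   # 21.9
print(round(nat_fixed, 2))        # 98.55
print(break_even_endpoints())     # 5
```

Under these assumptions, the fifth Interface endpoint is where the private option overtakes the three NAT Gateways on fixed cost. That matches the figures above, where the private bill passes the public one once logging and tracing are added.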

Here is a link to the cost calculator

💡 Couple this with a large multi account type architecture and the bills stack up quickly!

This is one of those rare situations where the instinct that keeping the data inside AWS is the cost-effective architecture doesn’t hold true.

So let’s look at why you might want to continue with this approach and what options you have to reduce the cost.

1. Compliance Reasons

The obvious reason is “Security Says NO!”. Often, it is a requirement that backend services are prevented from accessing the outside world, and for good reason. Bad actors exist everywhere (just stand up a public ALB and monitor the access logs if you like a fright!). This is perfectly fine, but as we’ll see there’s a better approach when using large multi-account architectures. On top of that, the VPC interface endpoint supports security groups and endpoint policies that can be used for fine-grained access control that is not as easily managed with a public access network. On the other hand, you are not able to associate a security group with a NAT Gateway.

2. Performance Reasons

With the public network approach, all the traffic goes through the NAT Gateway in each AZ. A NAT Gateway can support 5Gbps, scaling up to 100Gbps. If you require greater throughput than this, then the recommendation is to create new subnets and deploy new NAT Gateways to these subnets. By default you can have 5 NAT Gateways per AZ, which in theory could be 15 NAT Gateways for 3 AZs, providing up to 1.5Tbps. After this, a quota increase can be requested.

With the private network approach, we can have up to 50 VPC Gateway and Interface Endpoints per VPC. Each VPC endpoint can support 10Gbps scaling up to 100Gbps. So our potential throughput for a VPC is 5Tbps. This quota can be increased by making a request to AWS.

What can we do?

Ok, so assuming that we must keep the traffic private what are our options?

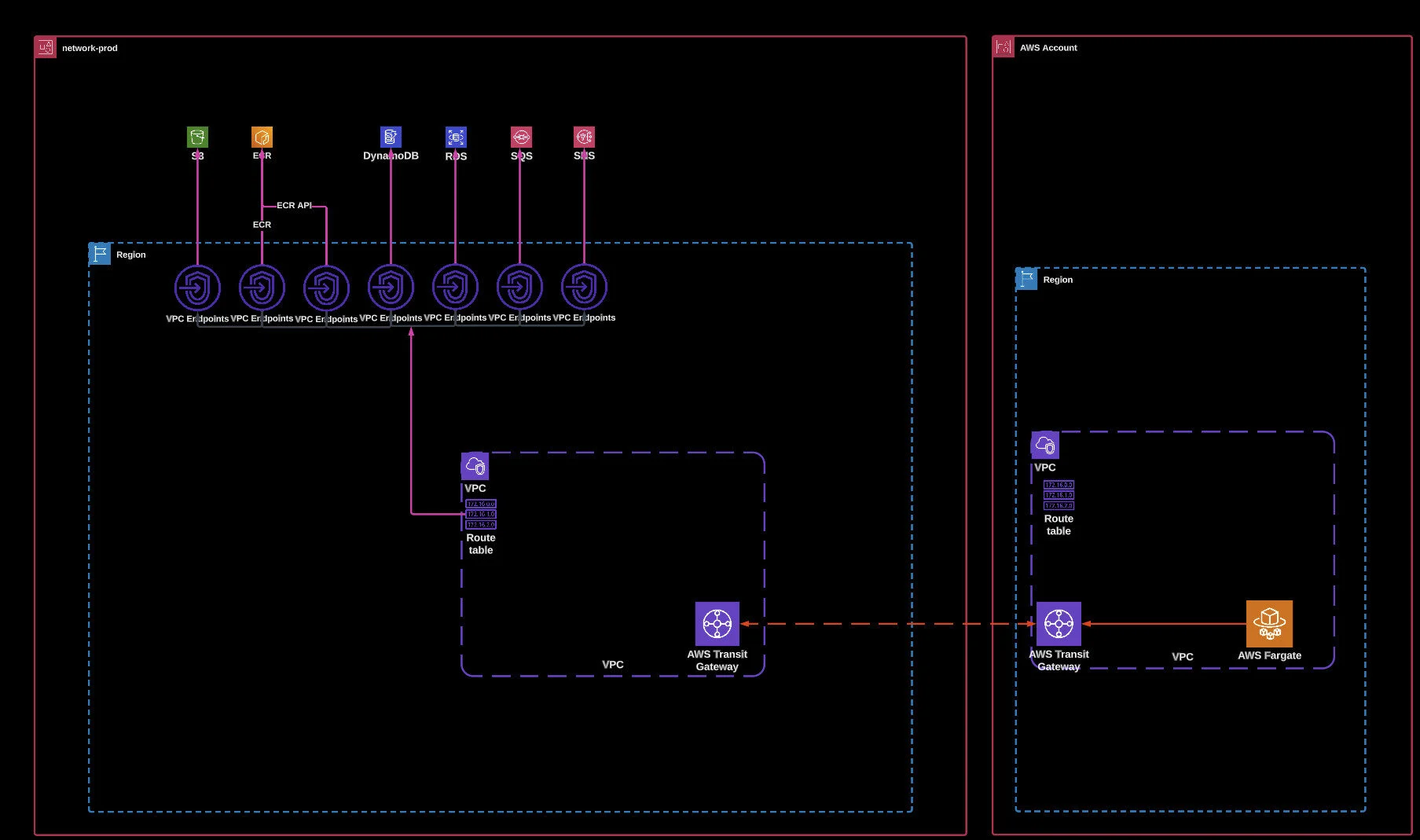

Well, in a large multi-account architecture we can reduce the number of VPC interface endpoints required by using a centralised services pattern. The architecture is shown in the diagram above.

Access to the VPC Endpoints is gained through a transit gateway attachment. This can drastically reduce the number of endpoints required but comes with trade-offs.

Tradeoffs

1. Transit Gateway Required

If you wish to implement this architecture, it requires connectivity between the VPCs, here through a Transit Gateway, which comes at an additional cost. Let’s say, for argument’s sake, we had 10 AWS accounts, each with 8 VPC Interface endpoints in 3 AZs. With the cost calculator this comes to $175.33 per month per account, so roughly $1750 per month in total.

Using the shared VPC endpoint model, we’d add the transit gateway attachments and have a single account hosting the VPC interface endpoints. This would result in one account paying $175.33 and a bill of $367.60 for the 10 Transit Gateway attachments. However, if you are using a multi-account architecture, it’s highly likely that Transit Gateway is already part of your existing infrastructure, so the TGW bill can be attributed to costs you are already paying.
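Putting the two models side by side makes the saving explicit. This is a small sketch that uses only the calculator figures quoted above. The variable names are illustrative.

```python
ACCOUNTS = 10
ENDPOINTS_PER_ACCOUNT_USD = 175.33   # 8 Interface endpoints in 3 AZs (from the text)
TGW_ATTACHMENTS_USD = 367.60         # 10 Transit Gateway attachments (from the text)

# Decentralised: every account runs its own set of endpoints.
decentralised = round(ACCOUNTS * ENDPOINTS_PER_ACCOUNT_USD, 2)
# Centralised: one shared set of endpoints plus the TGW attachments.
centralised = round(ENDPOINTS_PER_ACCOUNT_USD + TGW_ATTACHMENTS_USD, 2)
saving = round(decentralised - centralised, 2)

print(decentralised)   # 1753.3
print(centralised)     # 542.93
print(saving)          # 1210.37
```

On these numbers the centralised model costs roughly 69% less per month, and less again if the Transit Gateway attachments are already being paid for.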

2. Performance

As we have seen, we can have up to 50 VPC endpoints per VPC. With a multi-account architecture, it is likely that we will have many VPCs, allowing us to use more than 50 VPC endpoints. Consolidating these endpoints into a single account means that we are limited by the number of VPCs in that account multiplied by 50 VPC endpoints. Therefore, the bandwidth for the VPC endpoint is shared among the accounts rather than being dedicated to a particular VPC. On top of this, we are also introducing Transit Gateway, which comes with its own bandwidth limits. A Transit Gateway attachment has a bandwidth limit of 5Gbps which is not adjustable. This potentially reduces the effective throughput of the VPC Endpoint quite a lot.

3. Security

Using security groups and endpoint policies is less effective since they can only restrict access to the gateway attachment and not lock down individual services. This reduces the fine-grained control you have over access.

However, it must be mentioned that you are not stuck with either a centralised or decentralised architecture when it comes to VPC endpoints. You are free to mix and match to create a hybrid architecture, getting the best of both worlds, where the needs demand.

Summary

To summarise, there is no one correct choice or universal answer. Performance, Security, and Cost all factor into the scenario. It’s a combination of what’s necessary and what works inside cost parameters.

I hope this gives you some insights into the choices that need to be considered when deploying a Fargate application.

References

Highly recommended talk from Laura Caicedo https://www.youtube.com/watch?v=LNf8jjBt72Y

AWS Documentation:

https://docs.aws.amazon.com/vpc/latest/userguide/amazon-vpc-limits.html

https://docs.aws.amazon.com/vpc/latest/privatelink/vpc-limits-endpoints.html

https://docs.aws.amazon.com/xray/latest/devguide/xray-security-vpc-endpoint.html

https://docs.aws.amazon.com/vpc/latest/tgw/transit-gateway-quotas.html

https://docs.aws.amazon.com/vpc/latest/userguide/vpc-nat-gateway.html

https://aws.amazon.com/blogs/networking-and-content-delivery/centralize-access-using-vpc-interface-endpoints/

Thanks to Conor Maher for his contributions and feedback to this article.

If you have a question or need help with your ECS costs, don’t hesitate to reach out to us. We’d love to help!