AWS is increasing the max memory for Lambda from 3008 MB to 10240 MB with a linear increase in CPU.

With the adoption of serverless, Lambda has taken an important role in our distributed systems. Lambda functions require little setup, scale independently without extra configuration, and you’re only charged for execution time and the memory allocated.

This argument can win many discussions about where to deploy our functions. But is Lambda always right for the job? You might have come across a situation where Lambda could not cope well with a specific task, such as running headless Chrome for data scraping or doing image processing, where the memory limit of 3008 MB is just not sufficient and it doesn’t give you enough CPU power:

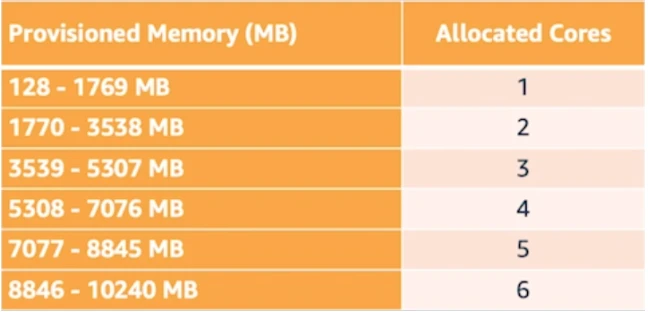

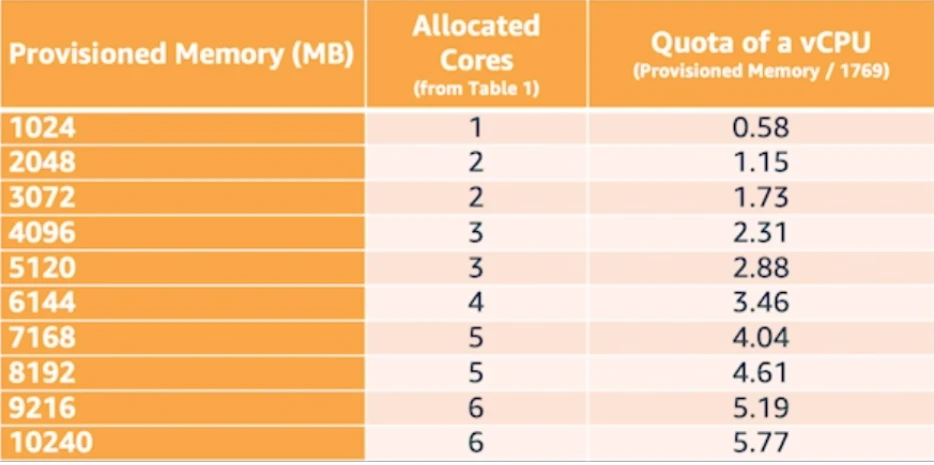

“Lambda allocates CPU power linearly in proportion to the amount of memory configured. At 1,792 MB, a function has the equivalent of one full vCPU (one vCPU-second of credits per second).”
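To make the quoted rule concrete, here is a minimal sketch that extrapolates it linearly. The only input taken from the quote is the 1,792 MB per vCPU figure; the function name is made up for illustration, and the real allocation at a given setting may not follow this line exactly.

```python
# Figure taken from the AWS quote above: 1,792 MB corresponds to one full vCPU.
MB_PER_VCPU = 1792


def estimated_vcpus(memory_mb: int) -> float:
    """Linear estimate of the vCPU share for a memory setting (an approximation)."""
    if memory_mb <= 0:
        raise ValueError("memory_mb must be positive")
    return memory_mb / MB_PER_VCPU


if __name__ == "__main__":
    # Compare the old limit, the one-vCPU point and the new limit.
    for memory_mb in (1024, 1792, 3008, 10240):
        print(f"{memory_mb:>6} MB -> ~{estimated_vcpus(memory_mb):.2f} vCPU")
```

Under this estimate the old 3008 MB ceiling sits well below two vCPUs, which is why CPU-heavy tasks struggled there.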

Should I then choose Fargate for the job?

When tasks require more memory or CPU power, Fargate has usually been the best alternative to Lambda. You can configure your containers to have up to 4 vCPUs and 30 GB of memory allocated. While this gives you more computing power, it also brings extra challenges that need to be accounted for:

- Billing: Fargate charges for the vCPU and memory your tasks are configured with, at per-hour rates, and the billed time includes pulling the container image from the registry.

- Scaling speed: Fargate can scale up 10 containers in the first second, but then it will take 2.3 seconds per container thereafter.

- The overhead of maintaining ECS clusters and a VPC.

- Idle container charges: You will be paying for idle containers until they are scaled down.
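To get a feel for the scaling figures in the list above, here is a rough sketch that turns them into an estimate. It assumes the numbers quoted there (10 containers in the first second, then 2.3 seconds per container) hold as a simple linear model; the function and parameter names are hypothetical.

```python
def estimated_scale_up_seconds(
    containers: int,
    burst: int = 10,                # containers started in the first second (figure quoted above)
    seconds_per_extra: float = 2.3  # seconds per additional container (figure quoted above)
) -> float:
    """Rough time to reach `containers` running containers under the quoted model."""
    if containers < 0:
        raise ValueError("containers must be non-negative")
    if containers == 0:
        return 0.0
    extra = max(0, containers - burst)
    return 1.0 + extra * seconds_per_extra


if __name__ == "__main__":
    for n in (10, 20, 100):
        print(f"{n:>3} containers -> ~{estimated_scale_up_seconds(n):.1f} s")
```

Under this model a burst to 100 containers takes minutes rather than seconds, which matters for spiky workloads.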

Will the 10 GB memory limit increase avoid the need to migrate to Fargate?

In a fair number of cases, it will. But first, you need to check the impact it will have on your monthly bill. AWS is keeping the same pricing as before: $0.0000166667 per GB-second, so the cost grows linearly with the memory you configure.
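The linear pricing can be sketched in a few lines. This covers the GB-second compute charge only, using the price quoted above; the workload numbers (one million invocations of 500 ms each) are made up for illustration, and any other charges are left out.

```python
# USD per GB-second, as quoted above.
PRICE_PER_GB_SECOND = 0.0000166667


def compute_cost_usd(memory_mb: int, duration_ms: float, invocations: int) -> float:
    """Compute-only Lambda cost: configured GB x billed seconds x invocations x price."""
    gb = memory_mb / 1024
    seconds = duration_ms / 1000
    return gb * seconds * invocations * PRICE_PER_GB_SECOND


if __name__ == "__main__":
    # Hypothetical workload: 1,000,000 invocations, 500 ms each.
    small = compute_cost_usd(1024, 500, 1_000_000)
    large = compute_cost_usd(10240, 500, 1_000_000)
    print(f"1024 MB:  ${small:.2f}")
    print(f"10240 MB: ${large:.2f} ({large / small:.0f}x)")
```

The ratio only holds if the duration stays the same. If the extra CPU lets the function finish sooner, the gap narrows accordingly.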

That means that, for the same execution time, a Lambda configured with 10240 MB will be 10 times more expensive than one with 1024 MB. It will probably end up more expensive than running Fargate and therefore be harder to justify to the CFO, right?

Well, running your containers on Fargate carries extra costs that are not reflected in the AWS bill:

- Maintenance of the ECS cluster

- Developer time setting up and maintaining the scaling of containers

When using Lambda, you get this out of the box, plus high availability, fault tolerance and integration with more than 140 other AWS services at no extra cost. Running Lambdas inside a VPC used to be a concern, but this has improved recently and might no longer be a problem for your case.

What are the main benefits?

- Running intensive workloads such as machine learning, media processing applications and analytics.

- Increase function performance by increasing memory and getting a proportional increase in CPU at the same time

- Running high compute or memory intensive workloads

- Remove operational overhead from running the application on Fargate or EC2

Key Performance Data

The tables below illustrate how CPU power is proportionally allocated alongside memory for our Lambda functions.

Things to take into consideration

Sometimes it’s easy to forget some principles and end up over-engineering our Lambdas, so keep this in mind if you’re planning to boost your Lambdas with memory and CPU power:

- Avoid creating a monolith. Your functions should be kept simple and execute one particular task. Don’t bring your whole Express.js API into one single Lambda.

- More CPU power means more network bandwidth. If you’re fetching data from an API or S3, remember not to overwhelm other services with too many requests. Otherwise you’ll end up throttled or exceeding the quotas.
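One way to keep a more powerful function from flooding a downstream service is to cap the number of requests in flight. The sketch below uses a fixed-size thread pool from the standard library; `fetch_all_bounded` and the `fetch` callable are hypothetical stand-ins for whatever client you actually use, and the right limit depends on the quotas of the service you call.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def fetch_all_bounded(
    items: Iterable[T],
    fetch: Callable[[T], R],  # hypothetical: your own API or S3 call
    max_in_flight: int = 8,   # assumed limit; tune it to the downstream quota
) -> List[R]:
    """Call fetch(item) for every item, with at most max_in_flight calls running at once."""
    if max_in_flight < 1:
        raise ValueError("max_in_flight must be at least 1")
    # The pool size is the concurrency cap; map() returns results in input order.
    with ThreadPoolExecutor(max_workers=max_in_flight) as pool:
        return list(pool.map(fetch, items))
```

A call such as `fetch_all_bounded(keys, download_object, max_in_flight=4)` would then never have more than four downloads running, however many vCPUs the function has (`keys` and `download_object` being your own data and helper).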

AWS is also moving the billing granularity from 100 ms to 1 ms. This is a good indicator that your functions should follow the KISS principle and avoid unnecessary costs.
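The effect of the finer granularity is easy to see with a small sketch. It assumes the billed duration is the actual duration rounded up to the next multiple of the billing granularity; the function name is made up for illustration.

```python
def billed_duration_ms(actual_ms: int, granularity_ms: int) -> int:
    """Round the actual duration up to the next multiple of the billing granularity."""
    if actual_ms < 0 or granularity_ms <= 0:
        raise ValueError("actual_ms must be >= 0 and granularity_ms must be > 0")
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-actual_ms // granularity_ms) * granularity_ms


if __name__ == "__main__":
    for actual in (12, 101, 250):
        old = billed_duration_ms(actual, 100)
        new = billed_duration_ms(actual, 1)
        print(f"{actual:>3} ms run: billed {old} ms at 100 ms steps, {new} ms at 1 ms steps")
```

Short functions benefit the most: under this model, a run just over a 100 ms boundary no longer pays for almost a full extra step.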

Get in touch to share your thoughts.